At NVIDIA GTC 2026, Jensen Huang walked onto the stage alongside a Disney humanoid robot and described a world where every company will build AI, and every industry will run on it. He wasn't talking about chatbots or dashboards. He was talking about Physical AI — machines that see, reason, and act inside real factories, in real time. For manufacturing, this is the most consequential technology shift since the PLC. And it starts with a question most Indian factories cannot yet answer: do you know what your machines are doing right now?

What NVIDIA GTC 2026 Actually Announced for Manufacturing

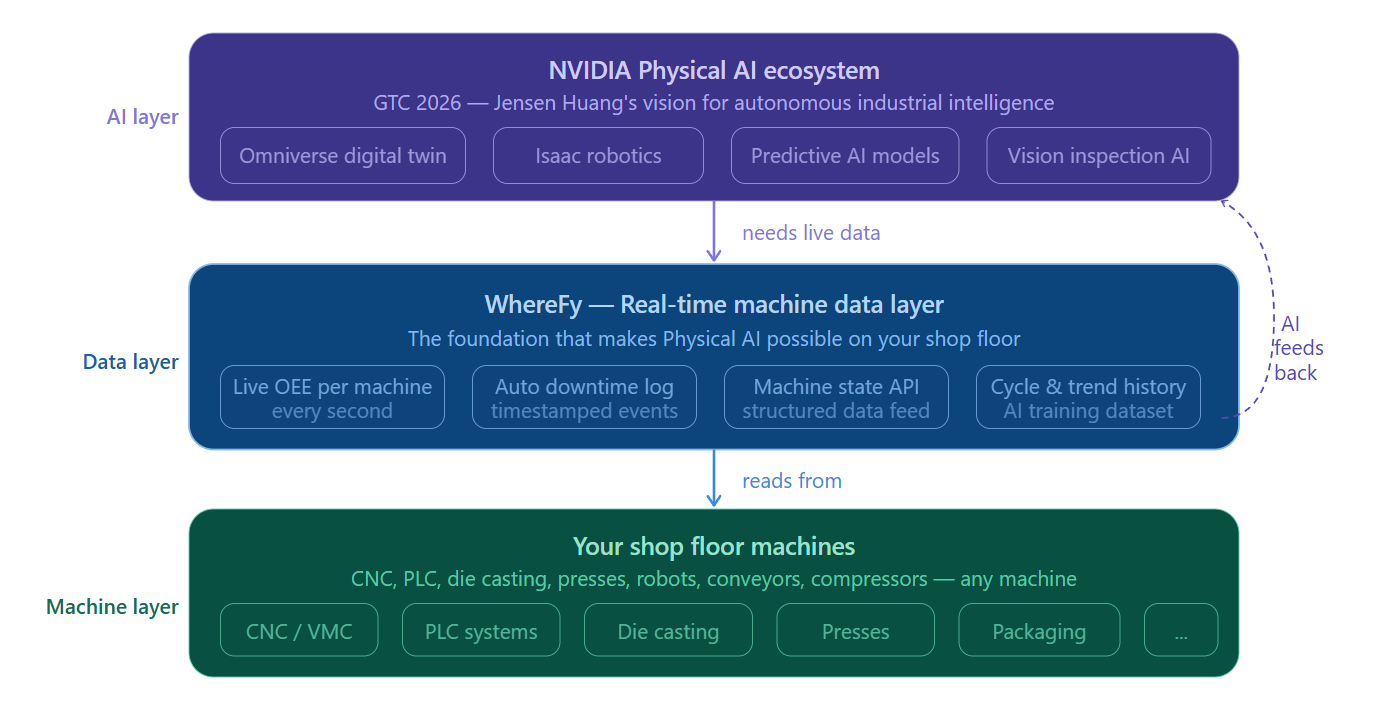

NVIDIA's GTC 2026 conference — held in San Jose in March 2026 — was not a GPU launch event. It was a declaration that AI has crossed from software into the physical world. Jensen Huang introduced the concept of Physical AI: AI systems that don't just process text or images, but operate within and interact with real physical environments — factory floors, warehouses, logistics networks, assembly lines.

Official Source

NVIDIA GTC 2026 — Jensen Huang Keynote & Physical AI Announcements

nvidia.com/gtc

The key announcements relevant to manufacturing included NVIDIA's Omniverse platform for factory simulation and digital twins, the Isaac robotics platform for autonomous industrial robots, and the concept of "AI factories" — data centres and software stacks dedicated entirely to running continuous AI inference inside physical operations. The underlying message was stark: AI is no longer something you deploy in IT. It is something that runs on your production line.

$1T+: NVIDIA's projected market for Physical AI in industrial operations by 2030

40%: OEE improvement potential when AI is trained on real-time machine data vs. historical logs

2026: The year Physical AI moves from pilot programs to production deployments in manufacturing

Physical AI Is Here. But It Has a Data Problem.

Here's what the keynote presentations at GTC 2026 didn't show enough of: what happens before the AI runs.

Every AI system — whether it's a vision model detecting defects, a robot navigating a factory floor, or a predictive maintenance algorithm watching motor vibration — needs data. Not historical data from last quarter's report. Not manual log entries. It needs live, continuous, machine-generated data from the exact moment something happens.

And here is the uncomfortable truth for most Indian manufacturers: they don't have it. The majority of Indian factories — from auto component plants in Rajkot to die casting units in Pune to printing facilities in Delhi — are still running on paper logs, shift-end summaries, and Excel sheets that are 6 to 24 hours old by the time anyone reads them.

"AI can move from observing operations to truly understanding them — but only when it has a reliable source of operational truth."

— The core challenge Physical AI must solve in manufacturing

You cannot run Physical AI on paper. NVIDIA's Omniverse can simulate your factory perfectly — but the simulation is only as good as the real-time data feeding it. Jensen Huang's vision of autonomous AI factories requires a continuous stream of machine state data: what is running, what is idle, what has faulted, what is running slow, what is about to fail. That data layer has to come from somewhere. In a WhereFy-connected plant, it already exists.

The 5 Ways Physical AI Will Change Your Shop Floor — And What You Need Ready

1. AI-Powered Defect Detection at the Machine

Vision AI systems — cameras connected to inference models — can now detect surface defects, dimensional errors, and assembly mistakes in real time, at production speed. NVIDIA's Metropolis platform for manufacturing vision is already being deployed in automotive and electronics plants globally. But these systems need to know machine state to function correctly: Is the machine running? What part number is loaded? What is the cycle count? That context comes from machine monitoring.

What you need ready

Live machine state data per cycle — exactly what WhereFy provides via PLC or sensor connection. Without it, your vision AI cannot distinguish a defect from a normal part made at reduced speed.
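To make the idea of "context" concrete, here is a minimal sketch of how a vision pipeline might look up the machine state that was in effect when a part was inspected. The `StateChange` record, its field names, and the `state_at` helper are hypothetical illustrations, not WhereFy's or NVIDIA's actual API.

```python
from bisect import bisect_right
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class StateChange:
    # Hypothetical record shape, assumed for illustration only.
    ts: float         # seconds since shift start when this state began
    state: str        # "RUNNING", "IDLE", "SETUP" or "DOWN"
    part_number: str  # part loaded when this state began


def state_at(events: List[StateChange], ts: float) -> Optional[StateChange]:
    """Return the state in effect at time ts; events must be sorted by ts."""
    idx = bisect_right([e.ts for e in events], ts) - 1
    return events[idx] if idx >= 0 else None


events = [
    StateChange(0.0, "SETUP", "P-100"),
    StateChange(60.0, "RUNNING", "P-100"),
    StateChange(300.0, "IDLE", "P-100"),
]

# A camera frame captured at t=120s is tagged with the machine context.
context = state_at(events, 120.0)
print(context)
```

With this lookup, a detection raised while the machine was in setup can be treated differently from one raised during a normal production run.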

2. Autonomous Mobile Robots on the Shop Floor

NVIDIA's Isaac platform and partner robots from companies like Boston Dynamics and Agility Robotics are moving into production environments: carrying materials, feeding machines, removing finished goods. These robots navigate using a combination of sensor fusion and AI-generated maps of the factory. Critically, they need to know which machines are active and which are in setup or down, so they can prioritise material delivery to running machines and skip idle ones.

What you need ready

A real-time machine availability feed that robots and their control systems can query. WhereFy's API-accessible data layer makes this possible without any custom development.
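As a rough sketch of what a robot scheduler could do with such a feed, the snippet below ranks running machines by how soon they will run out of material. The feed shape and the field names (`machine_id`, `state`, `minutes_of_material_left`) are assumptions made for this example and do not describe a documented WhereFy or Isaac interface.

```python
from typing import Dict, List


def delivery_queue(feed: List[Dict]) -> List[str]:
    """Order running machines by urgency; machines not running are skipped."""
    running = [m for m in feed if m["state"] == "RUNNING"]
    ranked = sorted(running, key=lambda m: m["minutes_of_material_left"])
    return [m["machine_id"] for m in ranked]


# Hypothetical snapshot of an availability feed.
feed = [
    {"machine_id": "CNC-01", "state": "RUNNING", "minutes_of_material_left": 25},
    {"machine_id": "CNC-02", "state": "DOWN", "minutes_of_material_left": 5},
    {"machine_id": "CNC-03", "state": "RUNNING", "minutes_of_material_left": 8},
    {"machine_id": "CNC-04", "state": "SETUP", "minutes_of_material_left": 0},
]

print(delivery_queue(feed))
```

CNC-02 and CNC-04 are low on material, but neither is running, so a delivery there would not recover any production.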

3. Predictive Maintenance Powered by AI Models

GTC 2026 featured multiple sessions on AI-driven predictive maintenance — models trained on thousands of machine hours that can identify failure patterns weeks before a breakdown occurs. Companies like Siemens and Rockwell Automation are embedding these models into their platforms. But the training data for these models must come from real machines, in real operating conditions. Historical paper logs are useless. Real-time sensor streams are the input.

What you need ready

Continuous machine data streams — current draw, cycle time trends, temperature readings, vibration signatures — logged automatically over weeks and months. WhereFy builds this historical data set from day one of installation.
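One simple signal that can be derived from such a stream is cycle time drift. The sketch below compares the mean of the most recent cycles against a baseline taken from the start of the log. The window sizes and the function name are illustrative assumptions; a production maintenance model would be far more involved.

```python
from statistics import mean
from typing import List, Optional


def cycle_time_drift(cycle_times: List[float],
                     baseline_n: int = 20,
                     window_n: int = 5) -> Optional[float]:
    """Fractional change of the recent mean cycle time versus the baseline.

    Returns None until enough cycles have been logged.
    """
    if len(cycle_times) < baseline_n + window_n:
        return None
    baseline = mean(cycle_times[:baseline_n])
    recent = mean(cycle_times[-window_n:])
    return (recent - baseline) / baseline


# 20 cycles at 30 s, then 5 cycles at 33 s (for example, a wearing tool).
history = [30.0] * 20 + [33.0] * 5
drift = cycle_time_drift(history)
if drift is not None and drift > 0.05:
    print(f"Cycle time up {drift:.0%} versus baseline")
```

A paper log that records one total per shift cannot produce this signal, because the drift only shows up at per-cycle resolution.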

4. Digital Twins That Reflect Reality

NVIDIA's Omniverse platform allows manufacturers to build photorealistic, physics-accurate digital twins of their entire factory. These twins can be used to simulate process changes, test new layouts, and train robots in simulation before deploying them on the floor. But a digital twin that isn't continuously updated with real operating data is just an expensive 3D model. The twin becomes genuinely useful when it reflects what the real factory is doing right now — which machine is running which part, at what speed, in which state.

What you need ready

A live data feed from every machine that can update the digital twin in real time. WhereFy's continuous data stream is exactly the kind of input NVIDIA's Omniverse connectors are designed to consume.

5. Closed-Loop AI That Improves Itself

The most advanced Physical AI systems described at GTC 2026 are not just reactive — they are adaptive. They observe outcomes, compare them against expected results, and update their operating parameters automatically. A CNC monitoring AI that detects a cycle time increase can trigger a tool wear alert, log the event, update the maintenance schedule, and notify the next-shift operator — without any human intervention. This closed loop requires an unbroken chain of real-time data from machine to AI model to action.

What you need ready

Automated, timestamped machine event data that AI models can consume as structured inputs. WhereFy logs every state change — start, stop, idle, fault, speed deviation — with millisecond-level timestamps that AI systems can parse directly.
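For illustration, a structured, machine-parseable event might look like the record below. The `MachineEvent` class and its fields are hypothetical; the article does not specify WhereFy's actual event schema.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass
class MachineEvent:
    # Hypothetical schema, assumed for this example.
    machine_id: str
    event: str      # "START", "STOP", "IDLE", "FAULT" or "SPEED_DEVIATION"
    ts: datetime    # timezone-aware timestamp of the state change

    def to_json(self) -> str:
        """Serialise with an ISO 8601 timestamp at millisecond precision."""
        record = asdict(self)
        record["ts"] = self.ts.isoformat(timespec="milliseconds")
        return json.dumps(record)


evt = MachineEvent(
    machine_id="CNC-07",
    event="FAULT",
    ts=datetime(2026, 3, 18, 9, 15, 2, 123000, tzinfo=timezone.utc),
)
print(evt.to_json())
```

Using an explicit time zone and a fixed precision keeps events from different plants comparable when a downstream model merges them.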

Why Indian Manufacturers Are Actually Well-Positioned — If They Act Now

There is a temptation to read news from GTC 2026 and assume that Physical AI is a technology for Tesla, BMW, and Amazon — not for a 50-machine auto parts plant in Rajkot or a die casting unit in Ahmedabad. That assumption is wrong, and it is dangerous.

Indian manufacturing has a structural advantage that most Western factories do not: it has not yet locked itself into expensive, legacy automation architectures that are now obsolete. Indian manufacturers who start building their real-time data foundation now — before the AI layer arrives — will be able to adopt Physical AI tools faster and cheaper than their Western counterparts who have to untangle decades of proprietary systems first.

The window for this advantage is roughly 24 to 36 months. After that, the cost of not having a real-time data layer will compound — in lost competitiveness, in missed AI adoption, and in the widening gap between digital and non-digital manufacturers.

Paper-Based Plant vs. WhereFy-Connected Plant: AI Readiness

| Capability | Paper / Manual | WhereFy Connected |
|---|---|---|
| Real-time machine state data | ✗ Not available | ✓ Every machine, live |
| AI vision system context input | ✗ Manual tagging required | ✓ Automatic via API |
| Digital twin live sync | ✗ Manual updates only | ✓ Continuous data feed |
| Predictive maintenance training data | ✗ Paper logs — unusable | ✓ Months of structured history |
| Robot material delivery optimization | ✗ No machine state signal | ✓ Live availability feed |
| Closed-loop AI improvement | ✗ No feedback loop | ✓ Timestamped event stream |
| Audit trail for AI decisions | ✗ Unverifiable | ✓ Immutable machine log |

What WhereFy Customers Get Right Now — Before the AI Arrives

You don't need to wait for humanoid robots or NVIDIA's Isaac platform to start benefiting from a real-time machine data foundation. WhereFy-connected plants already see immediate, measurable benefits from the same data layer that Physical AI will later require.

Live OEE Per Machine, Per Shift

Every machine reports its state (Running, Idle, Setup, Down) in real time. No operator input. No shift-end summary. A production manager in Rajkot can see the live OEE of every CNC in their facility from their phone.
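For readers less familiar with the metric, OEE is conventionally the product of availability, performance, and quality. The sketch below computes it for one machine over one shift. The function name, parameter names, and sample figures are illustrative assumptions, not values from a WhereFy deployment.

```python
def oee(planned_min: float, downtime_min: float, ideal_cycle_s: float,
        total_count: int, good_count: int) -> dict:
    """Compute OEE and its three factors for one machine over one shift."""
    run_min = planned_min - downtime_min
    if planned_min <= 0 or run_min <= 0 or total_count <= 0:
        raise ValueError("need positive planned time, run time and part count")
    availability = run_min / planned_min                       # time actually running
    performance = (ideal_cycle_s * total_count) / (run_min * 60.0)  # speed vs ideal
    quality = good_count / total_count                         # good parts share
    return {
        "availability": availability,
        "performance": performance,
        "quality": quality,
        "oee": availability * performance * quality,
    }


# Hypothetical shift: 480 planned minutes, 60 minutes down,
# 30 s ideal cycle, 700 parts made, 665 of them good.
shift = oee(planned_min=480, downtime_min=60, ideal_cycle_s=30,
            total_count=700, good_count=665)
print({name: round(value, 3) for name, value in shift.items()})
```

In this made-up shift the machine lands at roughly 69% OEE. The downtime and part counts are the inputs that automatic state reporting supplies without operator entry.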

Automatic Downtime Capture

Every stoppage — planned or unplanned — is timestamped and logged automatically. Micro-stoppages under 2 minutes that paper never captures are recorded. This data, accumulated over months, is the training set for your future AI maintenance models.
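As a minimal sketch of how stoppages can be derived from a stream of state changes, the snippet below turns timestamped states into stoppage intervals and picks out the micro-stoppages. The event format, the function name, and the treatment of every non-running state as a stoppage are assumptions for illustration; only the 2-minute cutoff comes from the text above.

```python
from typing import List, Tuple

MICRO_STOP_LIMIT_S = 120  # "under 2 minutes", per the paragraph above


def stoppages(events: List[Tuple[float, str]]) -> List[Tuple[float, float]]:
    """Return (start_ts, duration_s) for each completed non-RUNNING span.

    events are (timestamp_seconds, state) pairs sorted by time.
    A stoppage still open at the end of the log is not reported.
    """
    result = []
    stop_start = None
    for ts, state in events:
        if state != "RUNNING" and stop_start is None:
            stop_start = ts                      # machine just stopped
        elif state == "RUNNING" and stop_start is not None:
            result.append((stop_start, ts - stop_start))
            stop_start = None                    # machine resumed
    return result


log = [(0, "RUNNING"), (100, "IDLE"), (145, "RUNNING"),
       (600, "DOWN"), (1500, "RUNNING")]
all_stops = stoppages(log)
micro_stops = [s for s in all_stops if s[1] < MICRO_STOP_LIMIT_S]
print(all_stops, micro_stops)
```

The 45-second stop in this sample log is the kind of event that rarely reaches a handwritten shift sheet.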

Instant Alerts That Find You

When Machine 7 stops unexpectedly, or a cycle time exceeds threshold, WhereFy sends an alert via phone or email within seconds. No floor walk required. Response time drops from hours to minutes.

Shift Reports Generated Automatically

End-of-shift reports are generated and delivered automatically — no supervisor compilation, no Excel copy-paste. The data is machine-verified and timestamped, making it audit-ready and AI-consumable.

Multi-Plant Visibility on One Dashboard

Whether you have 2 machines or 200, one plant or five, WhereFy gives management a single view. This is the data layer that future AI orchestration systems will plug directly into.

WhereFy Insight

The manufacturers who will benefit most from Physical AI are not the ones who wait for the technology to fully mature. They are the ones who spend the next 12–18 months building a clean, continuous, machine-generated data foundation. WhereFy is that foundation — and it pays for itself in recovered production long before the AI arrives.

The Practical Roadmap: From Paper Logs to Physical AI

This is not a 5-year transformation. The path from where most Indian manufacturers are today to where NVIDIA's GTC 2026 vision points is a structured 3-phase journey that WhereFy is already helping customers execute.

Phase 1: Connect & See

Connect your existing machines to WhereFy via PLC, Modbus, OPC UA, or direct sensor inputs. Eliminate paper logs. Begin collecting real-time OEE, downtime, and cycle time data automatically. This phase alone typically recovers implementation cost within 60–90 days through production improvements.

Phase 2: Analyse & Optimise

With months of verified machine data accumulating, shift from reactive to proactive management. Identify chronic downtime causes. Benchmark machines against each other. Begin predictive maintenance scheduling based on actual cycle counts and operational patterns rather than time-based calendars.

Phase 3: AI-Enable & Scale

With a clean, structured, historical data foundation in place, your factory is ready to integrate AI tools — vision systems, autonomous maintenance models, robot coordination, digital twin synchronisation. Your WhereFy data becomes the live input that Physical AI tools from NVIDIA's ecosystem can directly consume.

The Bottom Line: The AI Future Starts with Data Today

NVIDIA GTC 2026 showed us where manufacturing is going. Autonomous robots. Vision-based quality systems. Self-optimising production lines. AI that understands not just what happened, but why — and acts accordingly.

But every single one of those capabilities requires the same foundation: continuous, real-time, machine-generated data from the shop floor.

The manufacturers who have that foundation when Physical AI arrives will adopt it in months. Those who don't will spend years building the data layer before they can even begin. The race is not for the AI tools. The race is for the data that feeds them.

WhereFy exists to make sure Indian manufacturers win that race.

Ready to build your Physical AI foundation?

Connect your first machine in days. See live OEE, downtime, and cycle data immediately. And start building the data layer that NVIDIA's Physical AI future requires.